Pose Estimation

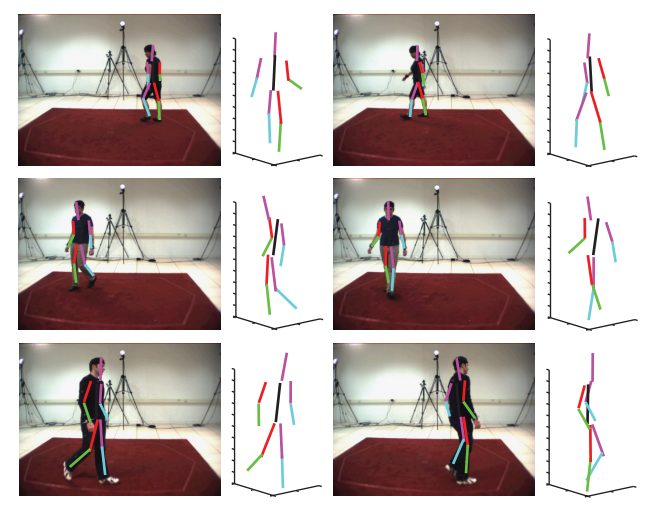

3D human pose estimation is a very difficult task. We propose that the problem can be solved more easily by first solving a set of easier sub-problems: locally estimating pose conditioned on a fixed root node state, which defines the global position and orientation of the person. The global solution can then be found using information extracted during this procedure. This approach has two key benefits. First, each local solution can be found by modeling the articulated object as a kinematic chain, which has far fewer degrees of freedom than alternative models. Second, the approach lets us represent, or support, a much larger area of the posterior than is currently possible. This allows far more robust algorithms to be implemented, since there is far less pressure to prune the search space to free up computational resources. We apply this approach to two problems. The first is single-frame monocular 3D pose estimation, where we propose a method that extracts 3D pose directly, without first extracting an intermediate 2D representation and without depending on strong spatial prior models. The second is multi-view 3D tracking, where we show that the same technique yields an approach that is far more robust than current approaches, without relying on strong temporal prior models. In both domains we demonstrate the strength and versatility of the proposed method.
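
The fix-the-root idea can be illustrated with a toy sketch. This is not the method from the paper: it assumes a hypothetical 2-link planar chain, noise-free observed joint positions, a squared-error cost in place of a real image likelihood, and an exhaustive search over a coarse joint-angle grid. All names (`LINK_LENGTHS`, `solve_local`, `solve_global`) are illustrative. Each candidate root state is held fixed while the chain is fitted locally, and the global estimate is then chosen from the local results.

```python
import itertools
import math

# Hypothetical limb lengths for a toy 2-link planar chain.
LINK_LENGTHS = (1.0, 0.8)
# Assumed joint limits: -90 to 90 degrees, sampled every 10 degrees.
JOINT_ANGLES = [math.radians(d) for d in range(-90, 91, 10)]


def forward_kinematics(root, angles):
    """Joint positions of the chain for a root state (x, y, heading)."""
    x, y, heading = root
    points = [(x, y)]
    direction = heading
    for length, angle in zip(LINK_LENGTHS, angles):
        direction += angle  # each joint rotates relative to its parent
        x += length * math.cos(direction)
        y += length * math.sin(direction)
        points.append((x, y))
    return points


def cost(root, angles, observed):
    """Sum of squared distances between predicted and observed joints."""
    predicted = forward_kinematics(root, angles)
    return sum((px - ox) ** 2 + (py - oy) ** 2
               for (px, py), (ox, oy) in zip(predicted, observed))


def solve_local(root, observed):
    """Best joint angles with the root state held fixed."""
    grid = itertools.product(JOINT_ANGLES, repeat=len(LINK_LENGTHS))
    best = min(grid, key=lambda angles: cost(root, angles, observed))
    return best, cost(root, best, observed)


def solve_global(root_candidates, observed):
    """Solve every local problem, then keep the lowest-cost one."""
    results = [(root,) + solve_local(root, observed)
               for root in root_candidates]
    return min(results, key=lambda result: result[2])


if __name__ == "__main__":
    true_root = (0.5, -0.5, 0.0)
    true_angles = (math.radians(30), math.radians(-40))
    observed = forward_kinematics(true_root, true_angles)
    roots = [(x, y, h)
             for x in (0.0, 0.5, 1.0)
             for y in (-0.5, 0.0, 0.5)
             for h in (0.0, math.pi)]
    root, angles, error = solve_global(roots, observed)
    print(root, [round(math.degrees(a)) for a in angles], error)
```

Because the root is fixed, each local search covers only the joint angles of the chain, so many root hypotheses can be evaluated and retained rather than pruned early.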


- IVC journal paper: "Fixing the Root Node: Efficient Tracking and Detection of 3D Human Pose through Local Solutions."

- Source code is available for download.